The AI industry has a dirty secret that nobody in Silicon Valley wants to talk about. ChatGPT has somewhere between 800 and 900 million users, yet only about 5% of them pay for the service. Roughly 80% of those users sent fewer than 1,000 messages across all of 2025, which works out to fewer than three prompts per day. That is not the profile of an indispensable business tool. That is the profile of a novelty that most people opened, tried a few times, and quietly stopped using.

Ben Evans, one of the most respected technology analysts working today, laid this argument out in devastating detail in his February 2026 essay, “How will OpenAI compete?” His thesis is straightforward: foundation models are commoditizing fast, ChatGPT’s engagement numbers are surprisingly thin, and the real value in AI is migrating upward to domain-specific vertical agents that actually do the work businesses need done. For any company currently evaluating how to deploy AI for customer experience, sales, or support, Evans’ analysis should be required reading, because it explains why buying ChatGPT subscriptions for your team is almost certainly the wrong move, and why investing in a vertical AI agent platform is the strategic play.

The implications extend far beyond OpenAI. They reshape how every business should think about AI procurement, deployment, and long-term competitive advantage.

The Foundation Model Commodity Trap

The most important structural fact about the AI industry in 2026 is this: roughly half a dozen organizations now ship competitive frontier models, and their capabilities leapfrog each other every few weeks. OpenAI, Anthropic, Google, Meta, Mistral, and others are locked in a capabilities arms race where any lead is measured in weeks, not years. As Evans puts it, there is “no mechanic we know of for one company to get a lead that others could never match.”

This matters enormously for businesses making AI purchasing decisions. When you buy a ChatGPT Enterprise subscription, you are betting that OpenAI will maintain a durable advantage in model quality. The evidence suggests that bet is poor. Anthropic’s Claude regularly matches or exceeds GPT-4 on key benchmarks. Google’s Gemini is rapidly closing gaps. Open-source models from Meta and Mistral are surprisingly capable for many business tasks. The foundation model layer is compressing toward commodity pricing, and no single provider has demonstrated a sustainable moat.

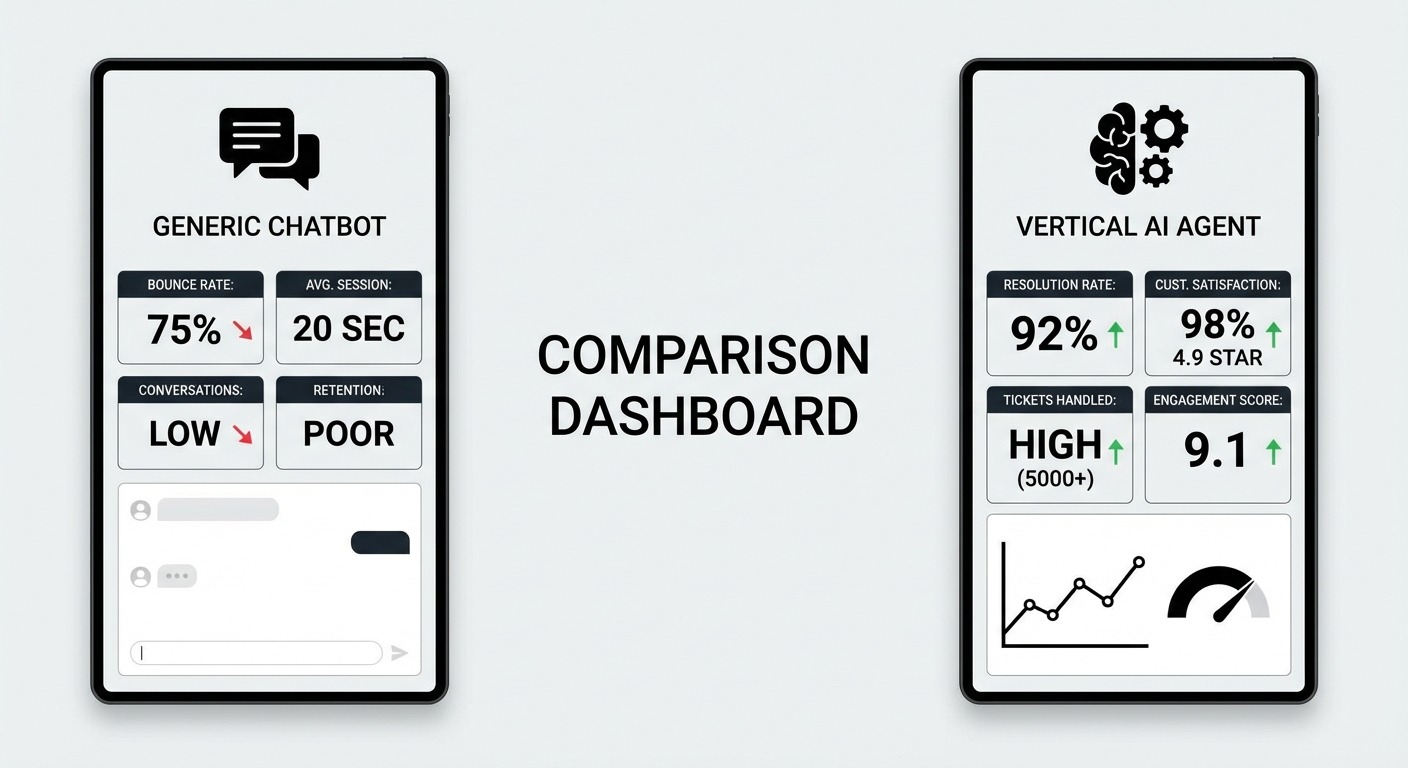

Evans’ engagement data makes the commodity argument even sharper. ChatGPT’s 800-900 million users sound impressive until you realize the vast majority barely use the product. Evans describes this as “very narrow engagement and stickiness, and no network effect.” Compare that to a tool like Salesforce or Slack, where users spend hours per day inside the product because it is woven into their actual workflows. ChatGPT sits outside the workflow. It is a tool you visit when you remember to, not a tool that runs your business.

The Netscape parallel that Evans draws is instructive and sobering. In the mid-1990s, Netscape had the best browser, the most users, and massive first-mover advantage. None of it mattered. Microsoft shipped Internet Explorer with Windows, and distribution won. Today, Google is shipping Gemini directly into Search, the product used by billions daily. Meta is embedding AI into WhatsApp, Messenger, and Instagram, platforms with billions of active users. Apple is integrating AI into Siri and iOS. Meanwhile, Anthropic’s Claude, which as the Luminary Lane analysis notes “regularly scores at the top of benchmarks,” has close to zero consumer awareness. The lesson is clear and brutal: the best model does not win. The best distribution wins.

Why a Chat Box Is Not a Business Product

Here is the core problem with ChatGPT as a business tool, and it is a problem that no amount of model improvement can fix. Evans captures it in a single devastating line: “If someone can’t think of anything to do with ChatGPT today, a better model won’t fix that.”

The issue is what you might call the capability-usage gap. Modern AI models are extraordinarily capable. They can draft legal briefs, analyze financial data, write marketing copy, debug code, and summarize research papers. But capability is not the same as utility. A general-purpose chat interface places the entire burden of value extraction on the user. The user has to know what to ask, how to prompt effectively, and what to do with the output. For most business users, that is an enormous cognitive load that produces inconsistent results.

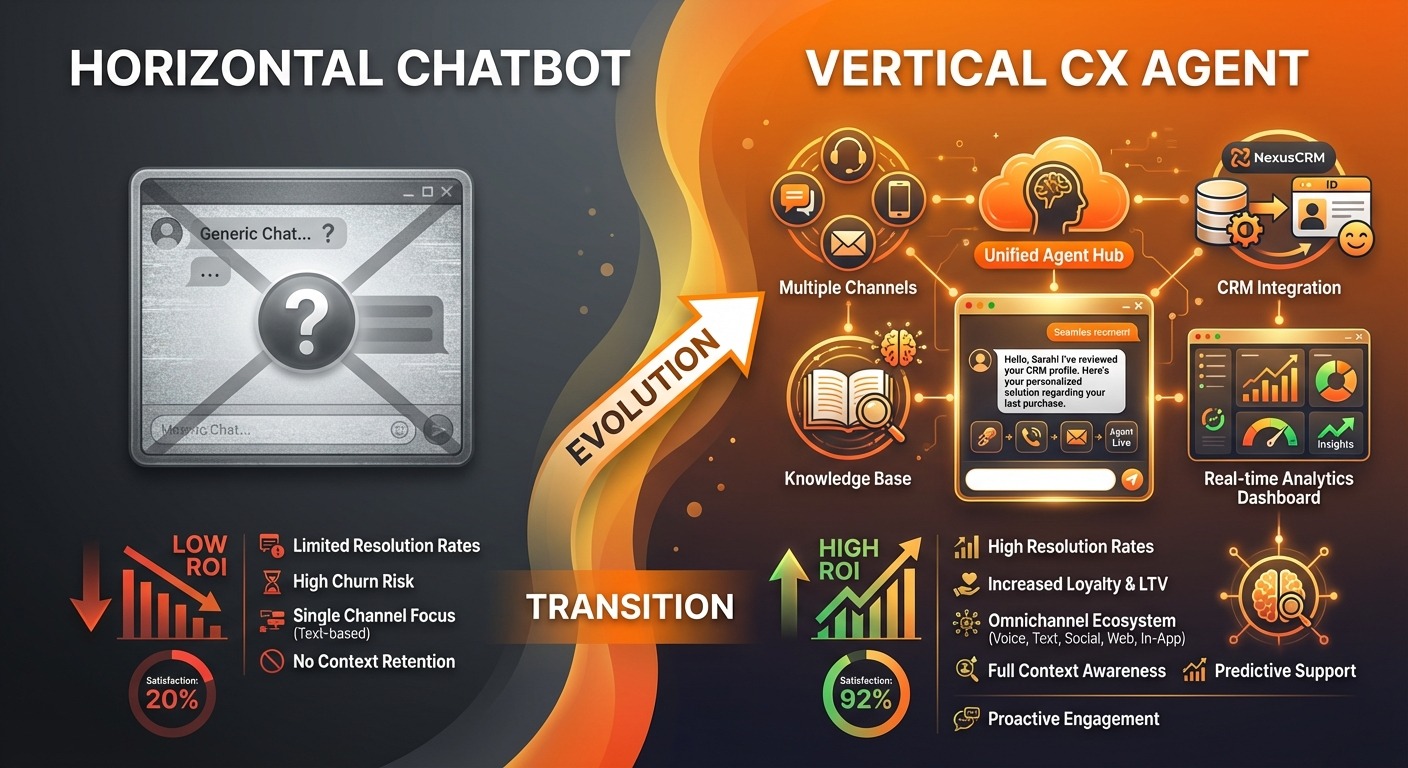

Think about this in concrete terms. A customer support manager does not need “a smarter chatbox.” She needs something that automatically routes incoming tickets based on urgency and topic, qualifies leads through structured conversation flows, books appointments without human intervention, tracks orders and provides real-time updates, and escalates complex issues to the right human agent with full context. None of those outcomes come from typing questions into ChatGPT. They come from purpose-built vertical agents that understand the domain, integrate with existing systems, and execute workflows end-to-end.

The distinction between the “copilot” approach and the “vertical agent” approach is critical for understanding where AI value actually lands. In the copilot model, the user prompts the AI, reviews its output, and then manually executes whatever needs to happen. The AI assists, but the human does the work. In the vertical agent model, the user sets strategy and reviews results, but the AI handles execution. The difference in capability utilization is enormous. A copilot approach might capture 10-20% of what the model can actually do. A vertical agent approach can capture 70-80%, because the agent is designed to apply the model’s capabilities to specific, well-defined tasks without requiring the user to be an expert prompter.

As Hebbia founder George Sivulka argued in his widely cited piece “In Defense of Vertical Software,” the value is not in the model itself. It is in the process engineering: knowing how to apply the model to a specific domain’s actual workflow. That is exactly what horizontal chatbots like ChatGPT cannot provide, and what vertical agents are purpose-built to deliver.

The Three-Layer Stack: Where Value Actually Lives

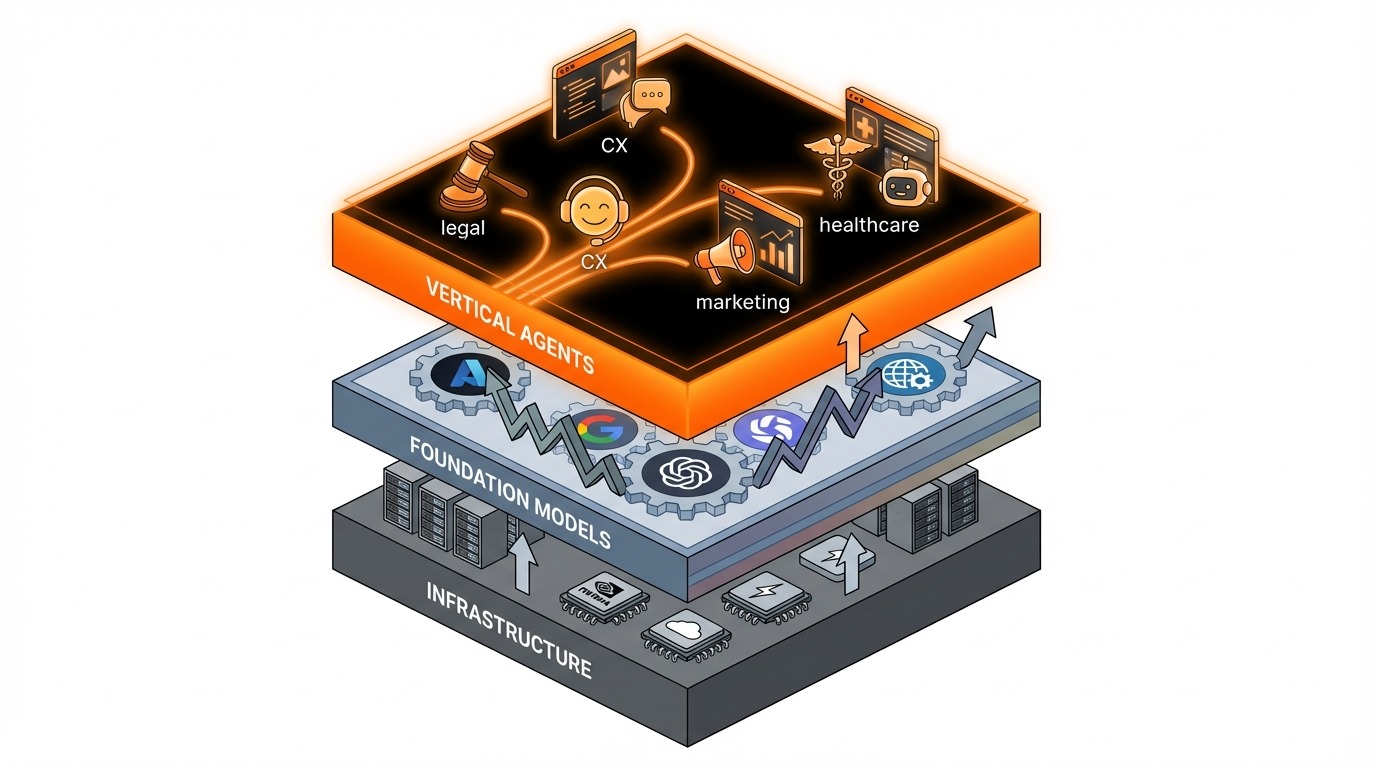

Evans’ analysis maps neatly onto a three-layer technology stack that every business leader should understand, because where you invest in this stack determines whether you capture lasting value or watch it evaporate.

The bottom layer is infrastructure: NVIDIA GPUs, TSMC chips, cloud computing platforms. This layer is capital-intensive and defensible, but it is also far removed from the end customer. The middle layer is foundation models: OpenAI, Anthropic, Google, Meta, and others. This is the layer that is commoditizing fastest, with no demonstrated moat and constant capability leapfrogging. The top layer is vertical agents and applications: companies like Harvey for legal, Sierra for customer experience, Lane for marketing, and ChatMaxima for omnichannel CX. This is the layer where domain expertise, workflow integration, and customer stickiness create durable value.

Evans drives this point home with what he calls the TSMC analogy. TSMC has a near-monopoly on cutting-edge chip fabrication, and yet that monopoly provides “little to no leverage further up the stack.” People built Windows applications, not TSMC applications. The same dynamic applies to foundation models. When developers call AI APIs to power their products, customers do not know or care what model is running underneath. They care whether the product solves their problem. You do not choose Salesforce because of its database engine. You choose it because it manages your sales pipeline. The engine is invisible.

The cloud computing parallel reinforces this pattern. AWS, Azure, and GCP commoditized infrastructure over the past fifteen years. The value did not stay at the infrastructure layer. It migrated upward to applications: Salesforce, Shopify, Stripe, HubSpot. These companies built domain-specific value on top of commodity infrastructure. AI is following the exact same arc. OpenAI, Anthropic, and Google are the AWS of AI, essential infrastructure that compresses toward commodity margins. Vertical agents are the Salesforce of AI, the application layer where durable, high-margin businesses get built.

So when a business asks “should we invest in AI?”, the real question is: at which layer? Investing at the foundation model layer means paying for a commodity. Investing at the vertical agent layer means paying for outcomes.

What a Vertical CX Agent Actually Looks Like

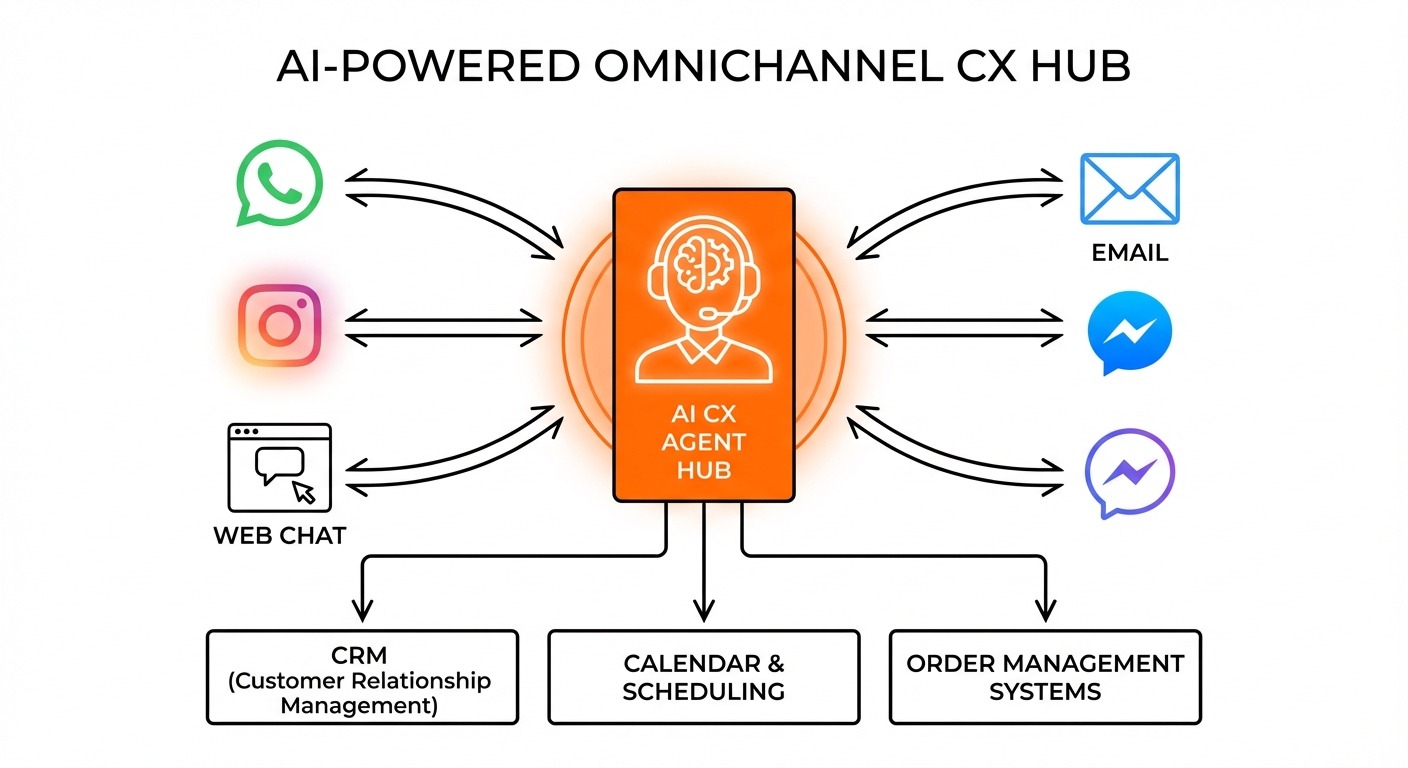

The difference between “ask AI about customer support” and “AI runs your customer support” is the difference between a science project and a business outcome. Vertical CX agents do not just answer questions. They execute complete workflows across the channels where your customers actually communicate.

Consider what a properly deployed vertical CX agent handles. It manages ticket routing, automatically categorizing incoming conversations by urgency, topic, and customer value, then directing them to the right team or handling them autonomously. It runs lead qualification flows, asking the right questions in the right sequence to determine whether a prospect is worth a sales team’s time, and booking meetings directly on the calendar. It handles appointment scheduling, order tracking, and returns processing without human intervention for straightforward cases. And when a conversation requires human judgment, it escalates with full context, conversation history, customer profile, and recommended actions, so the human agent can resolve the issue in minutes instead of starting from scratch.
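The routing and escalation behavior described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not any platform's actual implementation: the `Conversation` and `RoutingDecision` types, the keyword list, the topic names, the team names, and the 0.8 customer-value threshold are all hypothetical, and a production agent would likely use a model-based classifier rather than keyword matching.

```python
from dataclasses import dataclass, field
from typing import List

# Hypothetical keyword list and topic names, chosen only for illustration.
URGENT_KEYWORDS = ("refund", "outage", "cancel", "chargeback")
ROUTINE_TOPICS = {"order_status", "appointment", "returns"}


@dataclass
class Conversation:
    customer_id: str
    topic: str                 # e.g. "order_status", "billing"
    text: str
    customer_value: float      # hypothetical value score between 0.0 and 1.0
    history: List[str] = field(default_factory=list)


@dataclass
class RoutingDecision:
    handler: str               # "ai_agent" or the name of a human team
    urgency: str               # "high" or "normal"
    context: dict              # handed to the human agent on escalation


def route(conv: Conversation) -> RoutingDecision:
    """Classify a conversation and decide who handles it."""
    text = conv.text.lower()
    urgent = any(word in text for word in URGENT_KEYWORDS)
    urgency = "high" if urgent else "normal"

    # Routine, non-urgent topics are handled autonomously.
    if conv.topic in ROUTINE_TOPICS and not urgent:
        return RoutingDecision("ai_agent", urgency, context={})

    # Everything else is escalated with full context so the human
    # agent does not have to start from scratch.
    high_value = conv.customer_value >= 0.8
    team = "priority_support" if urgent or high_value else "general_support"
    context = {
        "customer_id": conv.customer_id,
        "topic": conv.topic,
        "history": conv.history + [conv.text],
        "recommended_action": "call_back" if urgent else "reply_in_thread",
    }
    return RoutingDecision(team, urgency, context)
```

Calling `route(Conversation("c1", "order_status", "Where is my package?", 0.2))` keeps the conversation with the AI agent, while a billing message that mentions a refund is escalated to the hypothetical `priority_support` team with its history attached.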

Channel-native execution is where vertical agents truly separate from horizontal chatbots. A WhatsApp conversation has different constraints and opportunities than an Instagram DM or a web chat widget. WhatsApp Business API supports template messages, quick replies, and interactive lists. Instagram DMs support story replies and rich media. Web chat supports proactive triggers and embedded forms. A vertical CX agent built for WhatsApp does not just translate a generic chatbot response into WhatsApp format. It uses the channel’s native capabilities to deliver a better experience than a human agent could provide at scale.

Team collaboration is the other critical dimension. The best vertical CX agents are not about replacing humans. They are about creating a system where AI handles the 70-80% of conversations that are routine, while humans focus on the 20-30% that require empathy, judgment, or creative problem-solving. This is what a platform like ChatMaxima’s team inbox enables: a unified view where AI and human agents work side by side, with seamless handoff and shared context.

CRM integration transforms the CX agent from a conversation tool into a business operations platform. When a vertical agent resolves a customer issue, it does not just close the conversation. It updates the CRM record, triggers follow-up workflows, adjusts customer satisfaction scores, and feeds data back into the system that makes the next interaction smarter. This is the kind of deep workflow integration that a ChatGPT subscription simply cannot provide, and it is why businesses that have deployed vertical CX agents with proper integrations see dramatically different results than those experimenting with generic AI chat tools.

Model-Agnostic Is the Real Moat

If foundation models are commoditizing, then betting your business on a single model provider is a strategic mistake. The smart play is to build on a platform that treats models as interchangeable components, swappable based on performance, cost, and capability without disrupting your workflows.

This is exactly Evans’ point crystallized into product strategy. When he says “customers don’t know or care what model you used,” he is describing the future of enterprise AI procurement. Businesses will not buy models. They will buy outcomes. And the platforms that deliver those outcomes will swap models underneath as frequently as a taxi company swaps engine parts.

ChatMaxima supports OpenAI, Anthropic Claude, Google Gemini, DeepSeek, Groq, Sarvam AI, Azure AI, Amazon Bedrock, and OpenRouter. When OpenAI ships its next GPT model, you switch in minutes. When Claude beats it on your specific use case next month, you switch again. When a new open-source model delivers 90% of the quality at 10% of the cost for your routine queries, you route those queries accordingly. Your workflows, your training data, your channel configurations, and your CRM integrations stay exactly as they are. The model is the engine, not the car.
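To make the "engine, not the car" idea concrete, here is a minimal sketch of model-agnostic routing. It assumes each provider can be wrapped as a plain function from prompt to reply; `premium_model`, `budget_model`, and the `ModelRouter` class are hypothetical stand-ins and do not represent ChatMaxima's or any vendor's real API.

```python
from dataclasses import dataclass
from typing import Callable, Dict

# A provider is just a function from prompt to reply. Real SDK calls
# would be wrapped to fit this shape; the stubs below are placeholders.
Provider = Callable[[str], str]


def premium_model(prompt: str) -> str:
    return f"[premium] {prompt}"


def budget_model(prompt: str) -> str:
    return f"[budget] {prompt}"


@dataclass
class ModelRouter:
    providers: Dict[str, Provider]
    routes: Dict[str, str]     # query class -> provider name
    default: str               # provider used when no route matches

    def complete(self, query_class: str, prompt: str) -> str:
        """Send the prompt to whichever provider serves this query class."""
        name = self.routes.get(query_class, self.default)
        return self.providers[name](prompt)

    def switch(self, query_class: str, provider_name: str) -> None:
        """Point a query class at a different model; callers are untouched."""
        if provider_name not in self.providers:
            raise KeyError(f"unknown provider: {provider_name}")
        self.routes[query_class] = provider_name


router = ModelRouter(
    providers={"premium": premium_model, "budget": budget_model},
    routes={"routine": "budget"},   # routine queries go to the cheaper model
    default="premium",
)
```

Workflow code only ever calls `router.complete(...)`, so swapping the model behind a query class is a one-line `router.switch("routine", "premium")` rather than a rewrite.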

This model-agnostic architecture is not just a technical convenience. It is a fundamental strategic advantage. Companies locked into a single model provider face three risks: pricing risk when that provider raises rates, performance risk when competitors leapfrog their model, and availability risk during outages or capacity constraints. A model-agnostic platform reduces exposure to all three, because a price increase, a performance gap, or an outage at one provider can be answered by routing to another. Head-to-head comparisons of AI models across conversational AI use cases often show smaller performance differences than the marketing would suggest, which further supports the model-agnostic approach.
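Availability risk in particular lends itself to a simple pattern: try providers in order and fall through to the next one on failure. The sketch below is illustrative only. The function names and the `AllProvidersFailed` exception are hypothetical, the outage is simulated, and a real system would catch narrower error types and add timeouts and retries.

```python
from typing import Callable, Sequence

Provider = Callable[[str], str]


class AllProvidersFailed(RuntimeError):
    """Raised when every configured provider has failed."""


def complete_with_fallback(prompt: str, providers: Sequence[Provider]) -> str:
    """Try each provider in order and return the first successful reply."""
    errors = []
    for provider in providers:
        try:
            return provider(prompt)
        except Exception as exc:   # a real system would catch narrower errors
            errors.append(exc)
    raise AllProvidersFailed(f"{len(errors)} provider(s) failed")


def flaky_primary(prompt: str) -> str:
    # Simulates an outage at the preferred provider.
    raise TimeoutError("simulated outage")


def steady_backup(prompt: str) -> str:
    return f"[backup] {prompt}"
```

With these stubs, `complete_with_fallback("hi", [flaky_primary, steady_backup])` returns the backup provider's reply, so the customer conversation continues even though the first provider is down.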

Distribution Is the Moat That Matters

Evans’ Netscape analysis has a direct corollary for business AI. If distribution beats product quality in consumer AI, the same logic applies to business CX: the platform that is already deployed on the channels where your customers communicate has a massive advantage over a general-purpose chatbot that you access through a web browser.

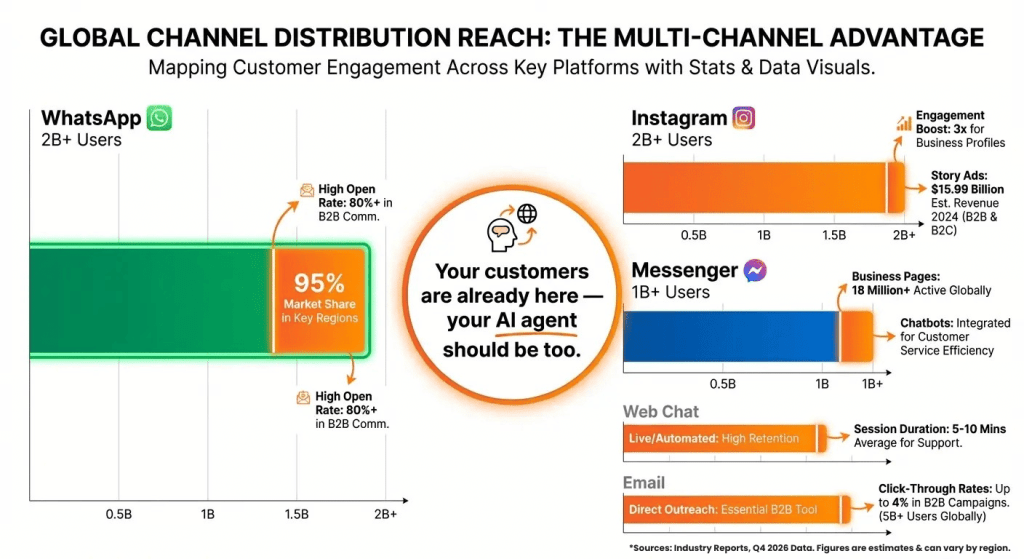

Google ships Gemini inside Search. Meta ships AI inside WhatsApp, Instagram, and Messenger. Apple ships AI inside Siri. These companies understand that distribution is the moat. For business customer experience, the distribution channels are WhatsApp with over 2 billion users, Instagram with over 2 billion users, Facebook Messenger, web chat, email, and SMS. These are the surfaces where customer conversations happen. A vertical CX agent that is already deployed across these channels, integrated with WhatsApp Business API templates, handling Instagram DM automation, and running web chat widgets on your site has a distribution advantage that no ChatGPT subscription can match.

This is why the question “should we use ChatGPT or a vertical CX agent?” is actually a distribution question in disguise. ChatGPT lives at chatgpt.com. Your customers live on WhatsApp, Instagram, and your website. A vertical CX agent built for multi-channel deployment meets customers where they already are, rather than asking them to go somewhere new. The best conversational AI applications in 2026 all share this characteristic: they are embedded in the channels and workflows where work already happens.

Businesses do not need to build this distribution from scratch. They need to plug into it. That is the value proposition of a vertical CX platform: pre-built channel integrations, pre-configured workflow templates, and pre-trained domain models that let you go live in days rather than months.

Why Businesses Need Vertical Agents, Not ChatGPT Subscriptions

The economics tell the story. ChatGPT Pro costs $200 per month per seat for a general-purpose tool that your team may or may not use effectively. Multiply that across a 20-person support team and you are spending $48,000 per year on a tool that helps people do work, rather than a tool that does the work.
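The seat arithmetic above is easy to re-run with your own numbers. The helper below is a small illustrative sketch; the function name `annual_seat_cost` is hypothetical, and the only inputs taken from the text are the $200 monthly price and the 20-person team.

```python
def annual_seat_cost(price_per_seat_month: float, seats: int, months: int = 12) -> float:
    """Total yearly spend for a per-seat subscription."""
    return price_per_seat_month * seats * months


# $200 per seat per month across a 20-person support team.
print(annual_seat_cost(200, 20))  # prints 48000
```

Changing the seat count or price shows how quickly per-seat pricing scales with team size, which is the comparison that matters when weighing it against a platform priced on outcomes or usage.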

A vertical CX agent from a platform like ChatMaxima costs a fraction of that and delivers measurably different outcomes. Instead of paying for “intelligence” that your team has to figure out how to apply, you are paying for a system that routes tickets, qualifies leads, books appointments, tracks orders, and escalates intelligently, all without requiring your agents to become expert prompters.

The decision framework is straightforward. If your need is general intelligence across many domains, for research, writing, and brainstorming, ChatGPT is a reasonable personal productivity tool. If your need is domain execution in a specific business function, for customer support, sales qualification, appointment booking, and order management, a vertical agent is the right investment. Most businesses making AI purchasing decisions are in the second category, even if the marketing from OpenAI makes them think they are in the first.

Evans’ prediction is playing out in real time. Vertical agents are capturing the high-value, high-margin segments of the AI market. Foundation model providers are compressing toward commodity margins, competing on price and benchmark scores in an endless race with no finish line. The companies that understand this distinction early will deploy AI that delivers measurable ROI. The companies that do not will spend the next two years buying ChatGPT seats, wondering why their “AI transformation” is not transforming anything, and eventually arriving at the vertical agent conclusion anyway, just later and at greater cost.

For businesses that need AI to actually run customer experience operations, the right question is not “which model should we use?” It is “which platform lets us build domain-specific workflows that work across every channel our customers use, with the flexibility to swap models as the landscape shifts?” That is the question a conversational AI assistant built on vertical principles is designed to answer, and it is a fundamentally different question than “should we upgrade to ChatGPT Pro?”

What This Means for Your Business

The structural shift from horizontal chatbots to vertical AI agents is not a prediction. It is happening now, and the window for early-mover advantage is narrowing. As Evans’ analysis makes clear, the foundation model layer is commoditizing fast, and the value is migrating to the application layer where domain expertise, workflow integration, and channel distribution create sticky, defensible businesses.

For any organization running customer experience operations, the action items are clear. Stop evaluating AI based on model benchmarks and start evaluating it based on workflow coverage. Ensure your platform is model-agnostic so you are never locked into a single provider’s pricing or performance trajectory. Deploy on the channels where your customers already communicate, not on a separate AI interface they have to learn. Integrate deeply with your CRM, ticketing, and scheduling systems so every AI interaction generates business value beyond the conversation itself. And do it with proper guardrails to ensure AI agents operate within the boundaries your business requires.

The AI vendors fighting over model supremacy are fighting the wrong war. The real war is at the application layer. And for business customer experience, the vertical agents have already won; most companies just have not realized it yet. The ones that figure it out first will build a compounding advantage in customer satisfaction, operational efficiency, and cost structure that their competitors will struggle to replicate.

If you are ready to see what a vertical CX agent looks like in practice, explore ChatMaxima’s platform or review the pricing to understand how model-agnostic, multi-channel AI deployment works at a fraction of what you would spend on ChatGPT seats for your team. The best time to make this shift was six months ago. The second best time is now.